GOAP

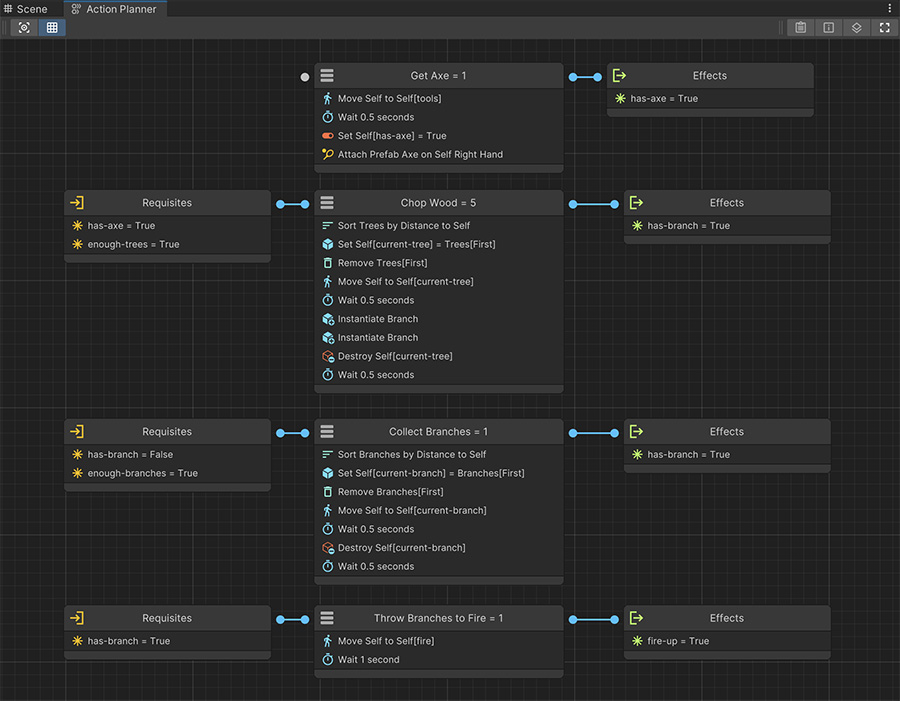

GOAP, short for Goal Oriented Action Planning, is an AI system that automatically builds Plans based on a list of Requisites and Effects that affect the agent's Beliefs.

The Goal of a GOAP system is to build a Plan that changes the current Beliefs into a state that satisfies a specific Requisite.

- Plan: A sequence of nodes executed in order to achieve a goal.

- Beliefs: The agent's current knowledge about the state of the world.

- Requisites: A collection of boolean conditions that need to be satisfied to run a node.

- Effects: A collection of changes that occur after executing a node.
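The four terms above can be pictured as a small data structure. The following is only a minimal sketch: the `Node` class, its field names, and the `open-door` example are assumptions made for illustration and are not taken from the actual asset. Beliefs are modelled as a plain dictionary of boolean values.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """One step that a Plan can contain (hypothetical structure)."""
    name: str
    requisites: dict = field(default_factory=dict)  # belief -> required value
    effects: dict = field(default_factory=dict)     # belief -> value after running

    def can_run(self, beliefs):
        # A belief the agent has no entry for is treated as False.
        return all(beliefs.get(key, False) == value
                   for key, value in self.requisites.items())

    def run(self, beliefs):
        # Return updated Beliefs without mutating the caller's dict.
        return {**beliefs, **self.effects}


# Hypothetical node and belief names, for illustration only.
open_door = Node("open-door",
                 requisites={"has-key": True},
                 effects={"door-open": True})

beliefs = {"has-key": True}
if open_door.can_run(beliefs):
    beliefs = open_door.run(beliefs)
```

A Plan is then just an ordered list of such nodes, where each node's Requisites hold at the moment it runs.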

Similar to Domino

The best analogy for this AI system is the game of Dominoes. Each piece has a number of dots on one side (the Requisites) and a number of dots on the other side (the Effects).

The Beliefs would be the number of dots currently required after placing a piece next to another one, and the Plan would be the sequence of pieces placed in order.

The beauty of GOAP is that you can add as many nodes as you want without drawing connections between them, and the AI system will come up with the best plan possible. The drawback (or advantage) is that it may come up with a plan the game designer did not foresee.

If there are multiple possible Plans, the AI system always prioritizes the one with the lowest overall Cost.
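One common way to pick the cheapest of several candidate Plans is a uniform-cost search over belief states. The sketch below assumes that approach; it is not necessarily how the asset does it internally. The function `cheapest_plan`, the node names, and the cost values are all hypothetical. Beliefs are simplified to a set of names that are currently true.

```python
import heapq
from itertools import count


def cheapest_plan(nodes, goal, start=()):
    """Uniform-cost search over belief states.

    nodes: list of (name, requisites, effects, cost) tuples, where
           requisites and effects are sets of belief names.
    goal:  the belief name that must become true.
    Returns (total_cost, [node names]) or None if no Plan exists.
    """
    start = frozenset(start)
    tie = count()  # tie-breaker so the heap never compares belief sets
    frontier = [(0, next(tie), start, [])]
    best = {start: 0}
    while frontier:
        cost, _, beliefs, plan = heapq.heappop(frontier)
        if goal in beliefs:
            return cost, plan
        if cost > best.get(beliefs, float("inf")):
            continue  # a cheaper route to these Beliefs was already found
        for name, requisites, effects, node_cost in nodes:
            if not requisites <= beliefs:
                continue  # Requisites not satisfied yet
            new_beliefs = beliefs | effects
            new_cost = cost + node_cost
            if new_cost < best.get(new_beliefs, float("inf")):
                best[new_beliefs] = new_cost
                heapq.heappush(
                    frontier,
                    (new_cost, next(tie), new_beliefs, plan + [name]))
    return None


# Hypothetical nodes: one expensive direct route and one cheaper two-step route.
nodes = [
    ("take-taxi", set(), {"be-in-hotel"}, 5),
    ("walk-to-restaurant", set(), {"be-in-restaurant"}, 1),
    ("walk-to-hotel", {"be-in-restaurant"}, {"be-in-hotel"}, 2),
]
result = cheapest_plan(nodes, "be-in-hotel")
# result == (3, ["walk-to-restaurant", "walk-to-hotel"])
```

Here the two-step Plan wins because its total Cost (3) is lower than the single-node alternative (5), even though it contains more nodes.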

Simple Example

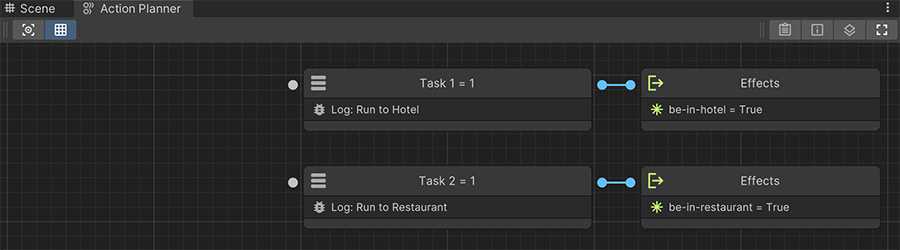

Let's say we have the following Action Plan and we ask it to build a Plan that satisfies the Goal: be-in-hotel.

There are two nodes. Neither has any Requisites, but each has one Effect:

- The first one satisfies be-in-hotel.

- The second one satisfies be-in-restaurant.

If we ask the Action Plan to build us a Plan, it would give us the first node, because running it satisfies the Goal be-in-hotel.

A more complex Example

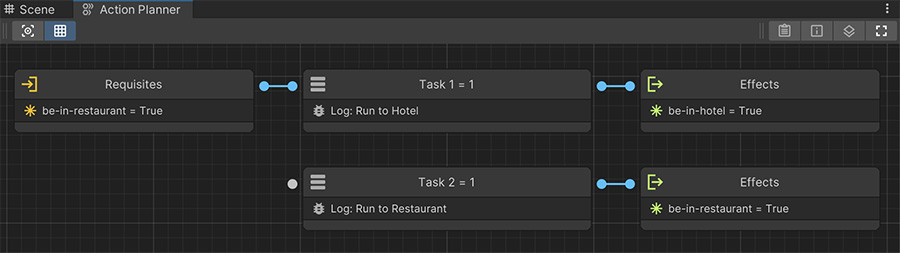

Let's imagine we now have the same scenario, but in order to enter the Hotel we need to walk through the Restaurant. This can be represented by adding the Requisite be-in-restaurant to the first node.

If we ask the AI system to build us a plan, it will quickly realize the plan can't be made of a single node, because the first node requires be-in-restaurant to be satisfied. However, the second node satisfies this condition, and thus it will give us the Plan sequence: Task 2 → Task 1.
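The reasoning in both examples can be reproduced with a small backward-chaining sketch: start from the Goal, find a node whose Effects satisfy it, and first resolve that node's Requisites. This is an illustrative simplification that ignores Cost, and the `build_plan` function and the dictionary layout are assumptions, not the asset's API.

```python
def build_plan(nodes, goal, beliefs=frozenset(), pending=frozenset()):
    """Return a list of node names that satisfies goal, or None.

    nodes: dict mapping name -> (requisites, effects), both tuples of
           belief names.
    """
    if goal in beliefs:
        return []      # already satisfied, nothing to do
    if goal in pending:
        return None    # circular requirement, give up on this branch
    for name, (requisites, effects) in nodes.items():
        if goal not in effects:
            continue
        plan, known = [], set(beliefs)
        for requisite in requisites:
            steps = build_plan(nodes, requisite,
                               frozenset(known), pending | {goal})
            if steps is None:
                break  # this node cannot be made runnable
            plan += steps
            for step in steps:
                known |= set(nodes[step][1])  # apply the Effects so far
        else:
            return plan + [name]
    return None


# Simple example: neither node has Requisites.
simple = {
    "Task 1": ((), ("be-in-hotel",)),
    "Task 2": ((), ("be-in-restaurant",)),
}
# Complex example: Task 1 now requires be-in-restaurant.
complex_case = {
    "Task 1": (("be-in-restaurant",), ("be-in-hotel",)),
    "Task 2": ((), ("be-in-restaurant",)),
}

simple_plan = build_plan(simple, "be-in-hotel")         # ["Task 1"]
complex_plan = build_plan(complex_case, "be-in-hotel")  # ["Task 2", "Task 1"]
```

Note that no connection between Task 1 and Task 2 is declared anywhere; the ordering falls out of matching Effects against Requisites.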

Thoughts

Beliefs are the agent's local knowledge about the world state at any given time, which can be transformed by applying Effects in order to reach a goal.

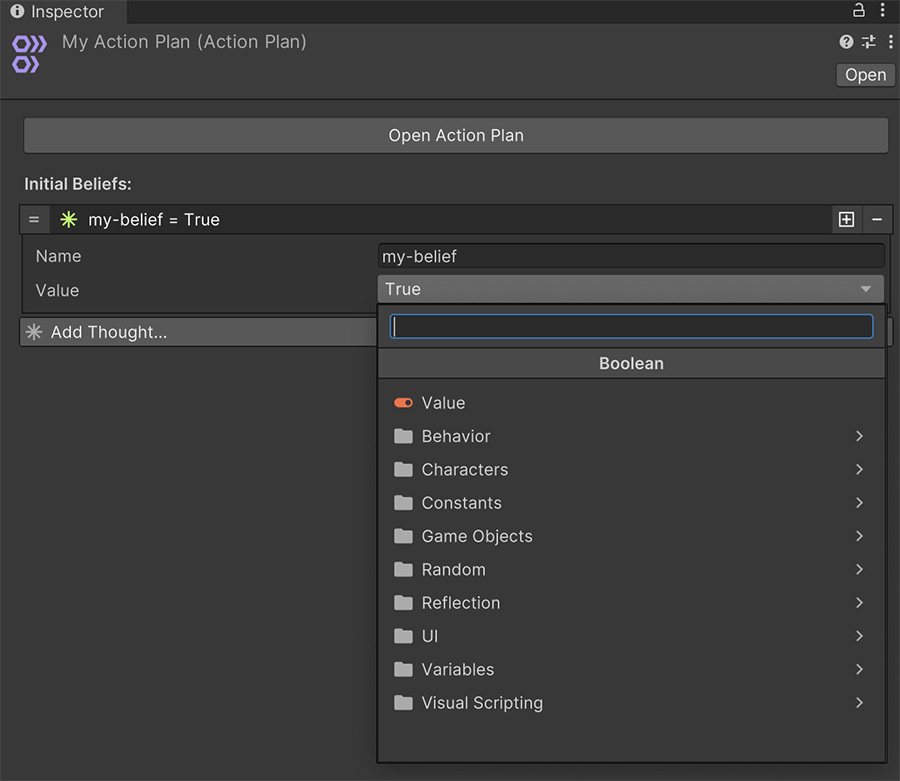

By default, when an Action Plan attempts to build a Plan, it starts with a blank slate of Beliefs. However, we can change that by giving it some default values whenever the plan starts being calculated.

To do so, select the Action Plan asset from the Project Panel and click on the Add Thought button. Thoughts are the initial values of an agent's Beliefs.

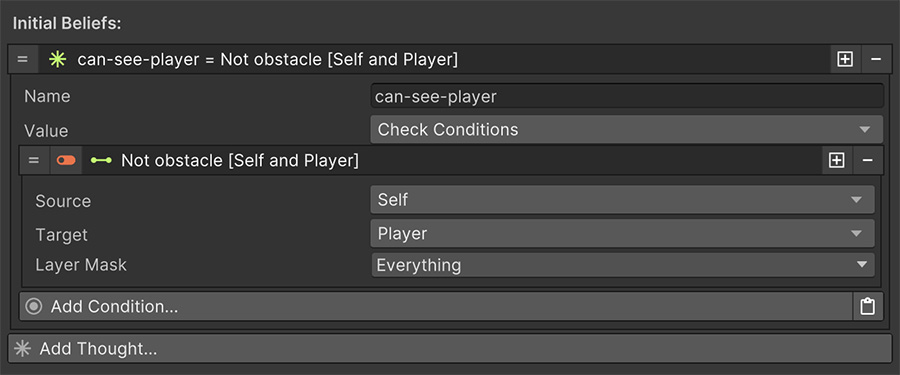

I am thinking whether I see the Player or not

For example, we could have the initial Belief (or Thought) of whether the agent can see the player or not. To do so we can use the Visual Scripting boolean option of a Condition and check whether there's an obstacle between the Player and the Self (the agent itself).
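Conceptually, a Thought pairs a Belief name with a condition that is evaluated when planning starts. The sketch below illustrates that idea outside the editor. Everything in it is hypothetical: the `can-see-player` name, the `initial_beliefs` helper, and the 2D line-of-sight test with circular obstacles stand in for whatever check the Visual Scripting Condition performs.

```python
import math


def has_clear_line(a, b, obstacles):
    """True if the segment a-b does not pass through any circular obstacle.

    obstacles: list of (centre_x, centre_y, radius) tuples.
    """
    (ax, ay), (bx, by) = a, b
    dx, dy = bx - ax, by - ay
    length_sq = dx * dx + dy * dy
    for cx, cy, radius in obstacles:
        # Project the centre onto the segment, clamped to its end points.
        t = 0.0 if length_sq == 0 else ((cx - ax) * dx + (cy - ay) * dy) / length_sq
        t = max(0.0, min(1.0, t))
        nearest = (ax + t * dx, ay + t * dy)
        if math.dist(nearest, (cx, cy)) < radius:
            return False
    return True


def initial_beliefs(thoughts):
    """Evaluate each Thought once to seed the Beliefs for planning."""
    return {name: bool(check()) for name, check in thoughts.items()}


agent, player = (0, 0), (10, 0)
wall = (5, 0, 1)    # obstacle sitting between agent and player
pillar = (5, 5, 1)  # obstacle off to the side

blocked = initial_beliefs(
    {"can-see-player": lambda: has_clear_line(agent, player, [wall])})
visible = initial_beliefs(
    {"can-see-player": lambda: has_clear_line(agent, player, [pillar])})
# blocked == {"can-see-player": False}
# visible == {"can-see-player": True}
```

The resulting dictionary replaces the blank slate, so any node whose Requisites depend on the seeded Belief can be considered from the first planning step.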